Every AI system that has ever impressed you was built on thousands of hours of human judgment.

ChatGPT doesn’t inherently know which responses are helpful. Tesla’s self-driving system doesn’t automatically recognize pedestrians. Claude doesn’t instinctively distinguish between a precise answer and one that sounds confident but is wrong.

Humans taught them.

And those humans were paid.

That process is data annotation.

Data annotation teaches models what “correct” looks like by rating outputs, tagging content, and catching errors that automated systems consistently miss. This work is not mindless clicking. It requires judgment, expertise, and consistency, which is why high-quality annotation commands professional rates rather than gig-economy wages.

This guide explains what data annotation actually involves: the major annotation types, the skills each requires, how compensation scales with expertise, and the practical path from application to earning.

No vague promises about “shaping the future of AI.” Just the operational reality of remote, expertise-driven work that fits around your life if you have the right skills.

What is data annotation?

Data annotation is the process of adding structured labels, ratings, and corrections to raw data so AI systems can learn from it. You are effectively grading AI homework.

When a chatbot produces an answer, a human evaluates whether it is helpful, accurate, misleading, or harmful.

When an autonomous vehicle processes camera footage, a human marks where pedestrians actually are.

That human judgment is valuable because automation cannot reliably replicate it.

Annotation work spans every data type modern AI systems consume: text, images, video, audio, code, and specialized scientific formats. Your annotations become the training signal that determines how models behave in the real world.

When you mark a response as “helpful but factually incorrect,” the model learns to prioritize accuracy.

When you outline a tumor boundary in a radiology scan, diagnostic AI learns what cancer actually looks like.

Real-world applications

Every major AI breakthrough relies on human annotation before deployment.

Chatbot response evaluation

You assess whether AI-generated answers are helpful, accurate, or potentially harmful. When a model offers medical advice that sounds reasonable but could cause harm, someone must catch it. That evaluation directly shapes how tools like ChatGPT and Claude respond to millions of users.

Autonomous vehicle training

You draw bounding boxes around pedestrians, cyclists, vehicles, and hazards in camera footage or LiDAR data. A two-pixel error in pedestrian labeling can mean the difference between braking and failing to brake. That precision is why this work requires sustained focus, not speed.
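One common way to quantify how far a box is off is intersection over union (IoU): the area where two boxes overlap divided by the area they cover together. The sketch below is illustrative only. The box sizes are made-up numbers, not data from a real project, and the `iou` helper is a hypothetical name. It shows why a two-pixel slip matters far more for a small, distant pedestrian than for a large, nearby one.

```python
def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes.

    Boxes are (x_min, y_min, x_max, y_max) in pixels.
    """
    ix_min = max(box_a[0], box_b[0])
    iy_min = max(box_a[1], box_b[1])
    ix_max = min(box_a[2], box_b[2])
    iy_max = min(box_a[3], box_b[3])
    # Overlap is zero when the boxes do not intersect at all
    inter = max(0, ix_max - ix_min) * max(0, iy_max - iy_min)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


# Hypothetical distant pedestrian: a 10 x 25 pixel box, drawn 2 pixels too far right
far_truth = (100, 200, 110, 225)
far_shifted = (102, 200, 112, 225)
print(round(iou(far_truth, far_shifted), 3))  # 0.667

# Hypothetical nearby pedestrian: a 100 x 250 pixel box with the same 2-pixel slip
near_truth = (100, 200, 200, 450)
near_shifted = (102, 200, 202, 450)
print(round(iou(near_truth, near_shifted), 3))  # 0.961
```

The same absolute error costs the small box a third of its overlap, which is why distant objects demand the most care.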

Voice assistant development

You transcribe speech, identify speakers, and label user intent. When someone says, “play something relaxing,” you teach the system whether that means music, ambient sounds, or a podcast.

Code generation safety

You review AI-written code from tools like GitHub Copilot, flagging security flaws, logical errors, and violations of best practices. A missed SQL injection vulnerability in training data teaches the model to generate insecure code.
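To make the SQL injection example concrete, here is the kind of flaw a reviewer is expected to flag, written with Python's built-in `sqlite3` module. The table and function names are hypothetical; the point is the contrast between pasting user input into a query string and passing it as a bound parameter.

```python
import sqlite3


def find_user_unsafe(conn, username):
    # Vulnerable: user input is formatted directly into the SQL text
    query = f"SELECT id, name FROM users WHERE name = '{username}' ORDER BY id"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Safe: the ? placeholder makes sqlite3 treat the input strictly as data
    query = "SELECT id, name FROM users WHERE name = ? ORDER BY id"
    return conn.execute(query, (username,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "' OR '1'='1"
print(find_user_unsafe(conn, payload))  # every row leaks: [(1, 'alice'), (2, 'bob')]
print(find_user_safe(conn, payload))    # []
```

If the unsafe version is rated as acceptable, a model trained on that rating has no signal to prefer the parameterized form.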

Medical image analysis

You trace tumor boundaries, distinguish benign from malignant findings, and identify anatomical structures. These annotations train diagnostic systems used by healthcare professionals. The stakes require domain knowledge, not surface-level pattern recognition.

Financial and regulatory compliance

You analyze contracts and regulatory documents to flag compliance risks, teaching AI systems to recognize legal issues. Knowledge of GAAP, IFRS, or regulatory frameworks allows you to catch problems generalist annotators would miss.

Each application creates specialized tracks where deep expertise translates directly into remote income. The technical complexity explains why this work pays professional rates.

Types of data annotation work and the skills you need

Your earning potential depends on your expertise. Data annotation spans multiple formats and techniques, each requiring different skills and offering different compensation levels.

At Coral Mountain, generalist work starts at $20/hour, coding and STEM work starts at $40/hour, and projects requiring professional credentials in law, finance, or medicine typically start at $50/hour or more.

“As a coder working part-time, I’m earning over $6,000 a month. Coral Mountain allowed me to pay off my debts and buy my first home at 27.”

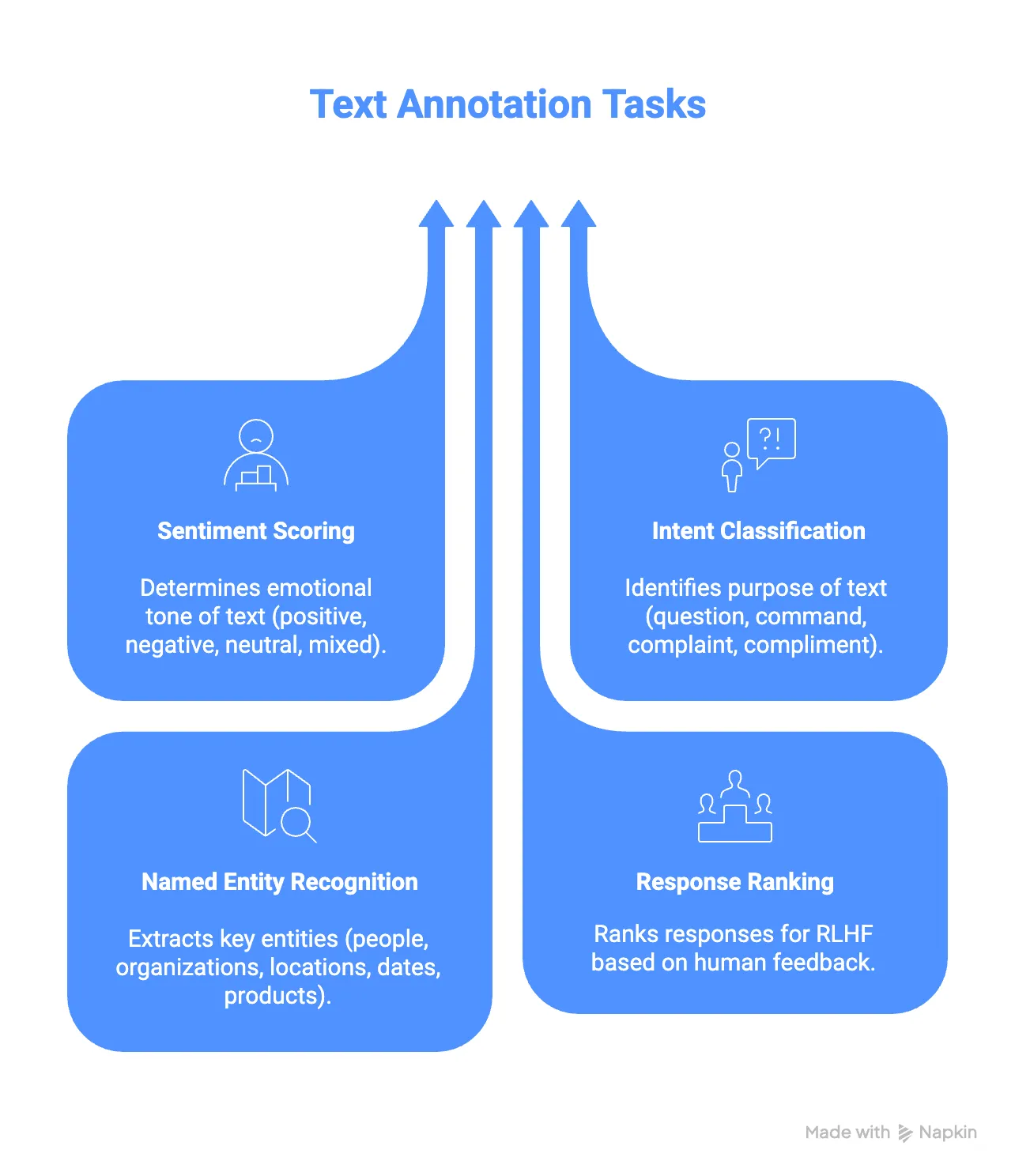

Text annotation

Text annotation trains language models to understand human communication. You evaluate AI responses, identify entities, analyze sentiment, and rank multiple outputs.

A typical task might involve reviewing three AI-generated customer emails and scoring each for tone, accuracy, and usefulness. Your rankings teach the model which response style to prefer.

Common tasks include sentiment analysis, intent classification, named-entity recognition, and response ranking for reinforcement learning from human feedback.
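Ranking is useful for reinforcement learning from human feedback (RLHF) because one ranking expands into several pairwise comparisons, a format reward models are commonly trained on. The sketch below assumes a simple best-to-worst list of response IDs; the IDs and the `ranking_to_pairs` helper are hypothetical, and real pipelines differ in how they store and weight these pairs.

```python
from itertools import combinations


def ranking_to_pairs(ranked_ids):
    """Expand a best-to-worst ranking into (preferred, rejected) pairs."""
    # combinations() keeps input order, so the first item of each pair
    # is always the response the annotator ranked higher
    return list(combinations(ranked_ids, 2))


# Hypothetical: an annotator ranks three AI-generated customer emails, best first
ranking = ["email_b", "email_a", "email_c"]
for preferred, rejected in ranking_to_pairs(ranking):
    print(f"{preferred} > {rejected}")
# email_b > email_a
# email_b > email_c
# email_a > email_c
```

Three ranked responses yield three comparisons; in general, K responses yield K(K-1)/2, so a single careful ranking carries a lot of training signal.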

Skills needed: Native-level language proficiency, strong reading comprehension, attention to nuance, and the ability to apply detailed guidelines consistently.
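Consistency is usually measured rather than assumed. One widely used metric is Cohen's kappa, which compares how often two annotators agree with how often they would agree by chance alone. This is a general-purpose sketch with made-up sentiment labels, not a description of how any particular platform scores its annotators.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Chance-corrected agreement between two annotators on the same items."""
    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Chance agreement: probability both pick the same label independently
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1:
        # Both annotators used one identical label for every item
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical labels from two annotators on the same ten responses
annotator_a = ["pos", "pos", "neg", "neu", "pos", "neg", "neg", "pos", "neu", "pos"]
annotator_b = ["pos", "neg", "neg", "neu", "pos", "neg", "pos", "pos", "neu", "pos"]
print(round(cohens_kappa(annotator_a, annotator_b), 2))  # 0.68
```

The two annotators agree on 80% of items, but because some agreement would happen by chance, kappa lands noticeably lower.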

Image and video annotation

Computer vision models rely on human-labeled visual data. You may draw bounding boxes, trace pixel-level boundaries, or track objects across video frames.

Simple tasks involve object detection. More advanced work includes semantic and instance segmentation, video tracking, and action classification. Precision matters far more than speed.

Skills needed: Strong visual acuity, spatial reasoning, consistency over long sessions, and comfort with detail-intensive work.

Audio annotation

Voice-based AI systems depend on accurate audio labeling. You transcribe speech, identify speakers, tag intent, and label background noise.

Multilingual and low-resource language expertise is especially valuable here, because automated speech systems tend to perform poorly on languages with little available training data.

Skills needed: Sharp listening ability, language fluency, and stamina for extended audio review.

Coding annotation

AI code generation tools require experienced developers to evaluate outputs. You review AI-written code, identify bugs, assess performance and security, and rank multiple implementations.

The work mirrors professional code review. You catch subtle logic flaws, security risks, and architectural issues that automation misses.

Skills needed: Professional programming experience, debugging expertise, understanding of code quality and security principles.

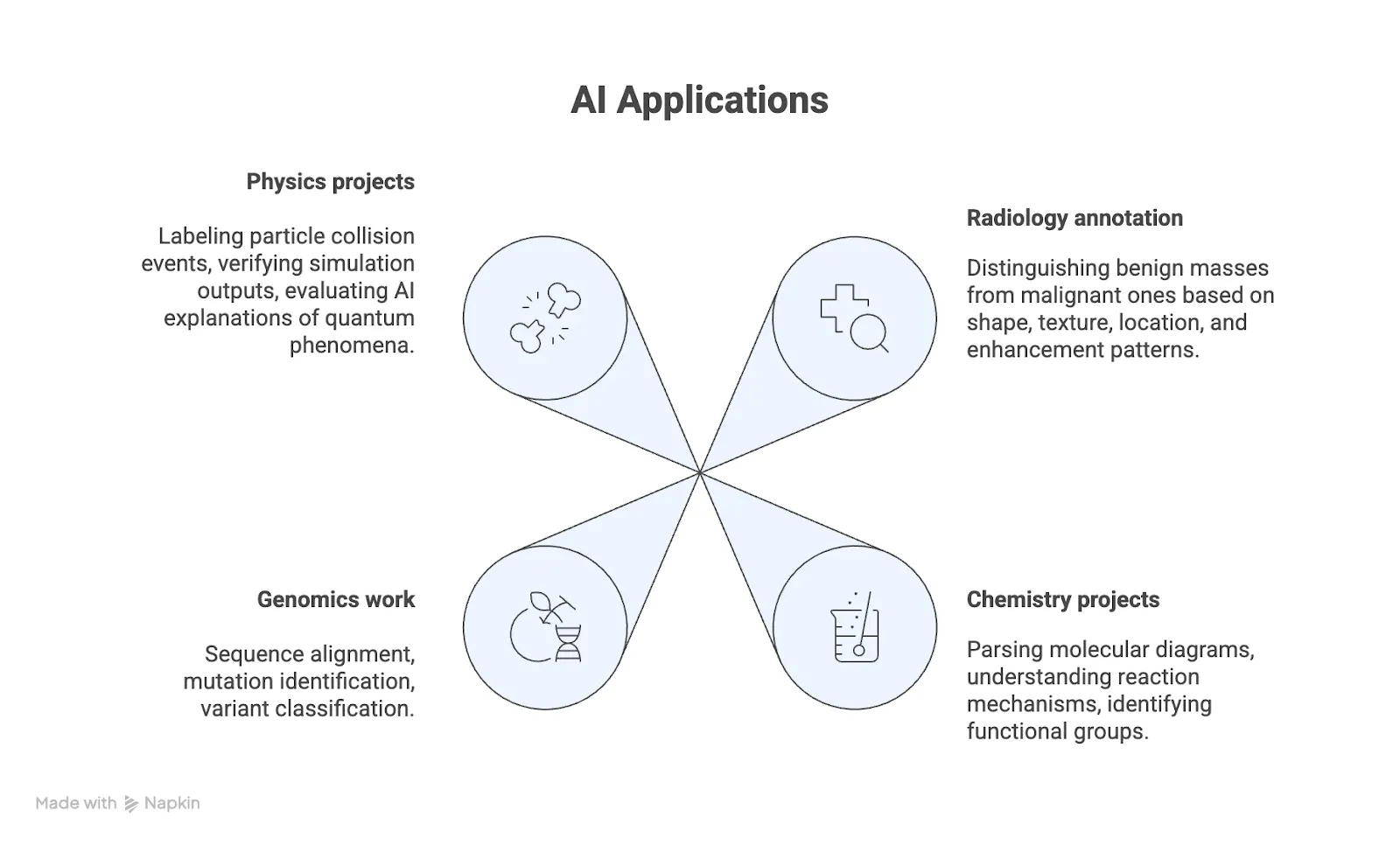

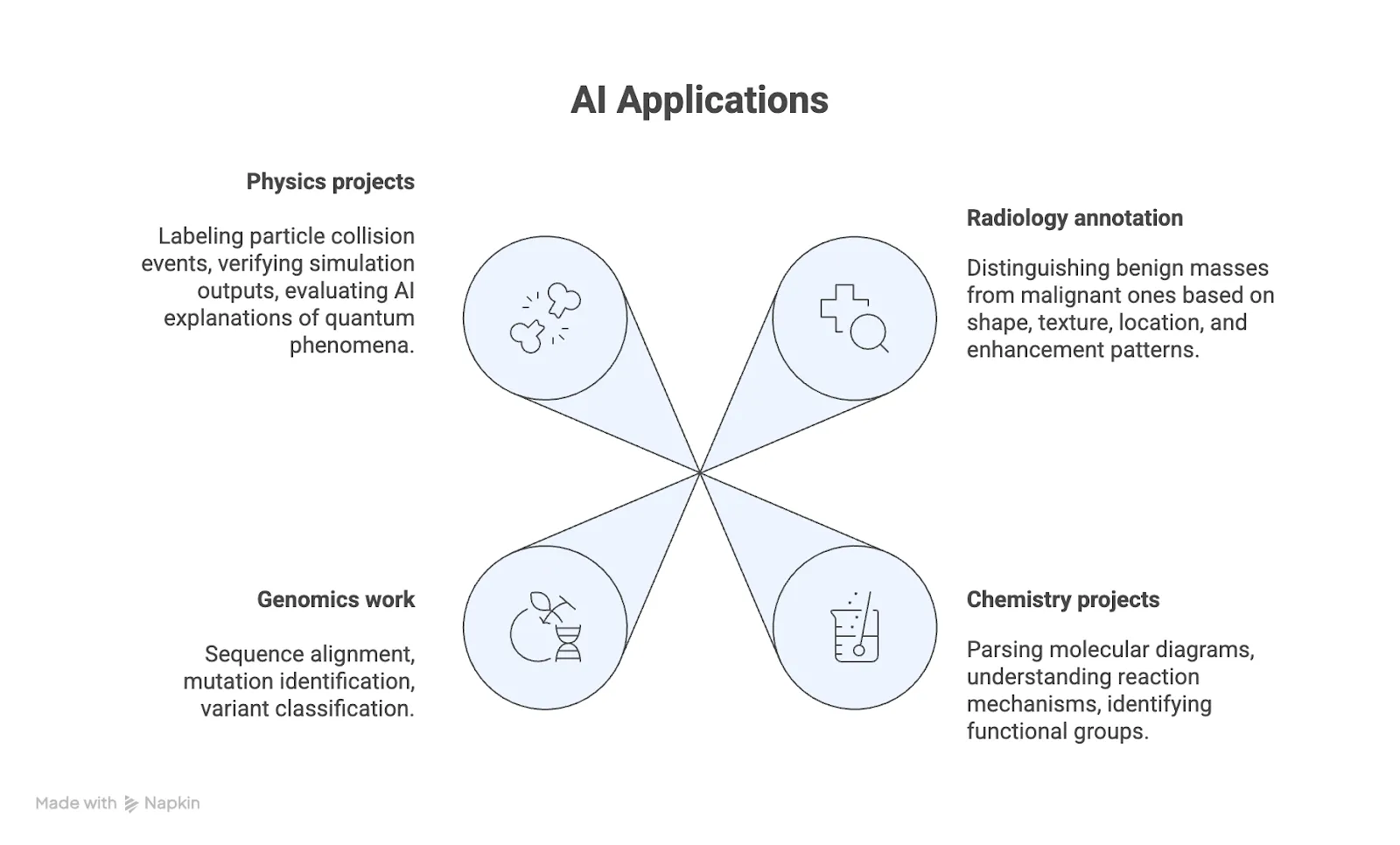

Specialized STEM and domain-specific annotation

Some datasets require deep scientific or technical expertise: medical imaging, genomics, chemistry, physics, and more.

You may work with specialized ontologies, evaluate simulations, or label complex scientific data. Errors here have real-world consequences.

Skills needed: Advanced degrees or equivalent professional experience, fluency in domain-specific terminology, and rigorous attention to accuracy.

LiDAR annotation

Autonomous systems rely on 3D point cloud data from LiDAR sensors. You label vehicles, pedestrians, and infrastructure in three dimensions, often with tight tolerances on position, size, and orientation.

This work demands strong spatial reasoning and comfort with 3D visualization tools.

Skills needed: Spatial intuition, consistency across large datasets, and basic physics understanding.
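LiDAR labels are typically 3D cuboids described by a center, dimensions, and a heading angle, and a quick sanity check is which points in the cloud actually fall inside a box. The sketch below is a simplified, hypothetical version of that check in plain Python. It assumes boxes rotate only about the vertical axis (yaw), as is common in driving datasets, and it is not the format of any particular annotation tool.

```python
import math


def points_in_cuboid(points, center, size, yaw):
    """Return the points that fall inside a yaw-rotated 3D box.

    points: list of (x, y, z) in meters
    center: (cx, cy, cz) box center
    size:   (length, width, height)
    yaw:    rotation about the vertical z-axis, in radians
    """
    cx, cy, cz = center
    length, width, height = size
    cos_y, sin_y = math.cos(-yaw), math.sin(-yaw)
    inside = []
    for x, y, z in points:
        # Translate into the box frame, then undo the box's rotation
        dx, dy, dz = x - cx, y - cy, z - cz
        local_x = dx * cos_y - dy * sin_y
        local_y = dx * sin_y + dy * cos_y
        if abs(local_x) <= length / 2 and abs(local_y) <= width / 2 and abs(dz) <= height / 2:
            inside.append((x, y, z))
    return inside


# Hypothetical car: 4.5 m long, 1.8 m wide, 1.6 m tall, heading along the y-axis
cloud = [(10, 2, 0.8), (12, 0, 0.8), (10, 0, 2.0)]
print(points_in_cuboid(cloud, (10, 0, 0.8), (4.5, 1.8, 1.6), math.pi / 2))
# [(10, 2, 0.8)]
```

With the heading set to zero instead, the point at (12, 0, 0.8) would count as inside the car and (10, 2, 0.8) would not, which is why orientation is labeled as carefully as position.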

Who qualifies for AI training work at Coral Mountain?

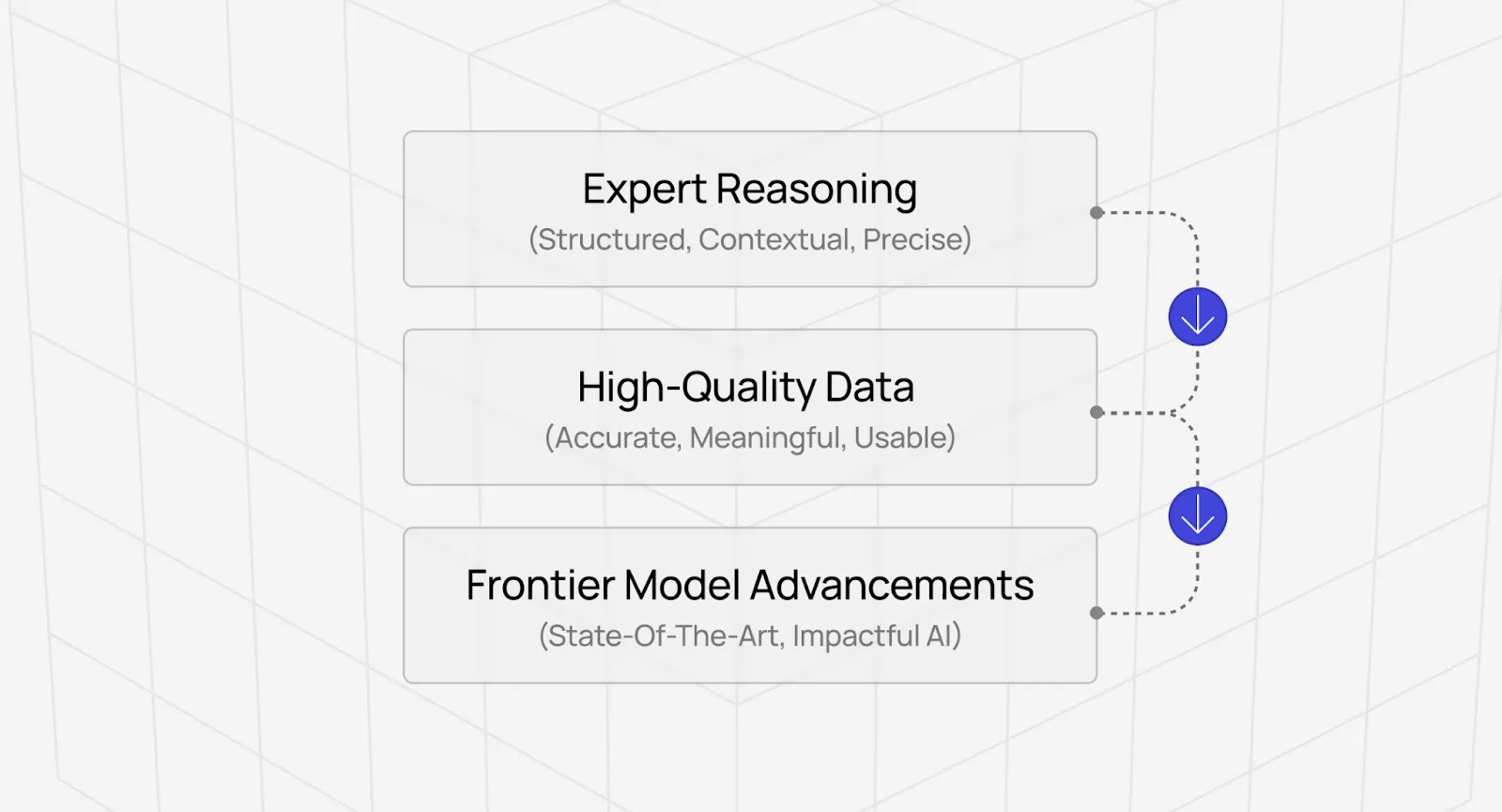

AI training at Coral Mountain is not mindless data entry. It is a critical bottleneck in frontier model development.

Every advanced model depends on human intelligence that algorithms cannot replace. As models become more capable, this dependence increases rather than disappears.

This work is well-suited for:

- Domain experts who want their expertise to matter

- Professionals who need flexible income without lowering intellectual standards

- Creative specialists who understand quality beyond surface correctness

- People motivated by contributing to AGI development

Your judgment carries into systems that operate at global scale.

How to get an AI training job

Coral Mountain uses a tiered qualification system based on demonstrated ability.

You begin with a Starter Assessment, which typically takes about one hour. It is not a résumé screen; it evaluates whether you can actually do the work.

Once qualified, you gain access to projects aligned with your expertise:

- General projects starting at $20/hour

- Multilingual work starting at $20/hour

- Coding and STEM projects starting at $40/hour

- Professional credential projects starting at $50/hour

You choose when and how much you work. There are no minimum hours, no fixed schedules, and no penalties for stepping away.

The work fits your life, not the other way around.

Explore AI training work at Coral Mountain today

The difference between models that pass benchmarks and models that succeed in production lies in the quality of their training data.

If you have technical expertise, domain knowledge, or the judgment to catch what automated systems miss, AI training at Coral Mountain places you at the frontier of AI development.

Not as a button-clicker, but as someone whose decisions shape billion-dollar systems.

Getting started is simple:

- Visit the Coral Mountain application page

- Submit your background and availability

- Complete the Starter Assessment

- Receive your approval decision

- Select projects and begin earning

No fees. High standards. One assessment attempt.

Apply to Coral Mountain if you understand why quality beats volume in advancing frontier AI and you have the expertise to contribute.
