What showed up on job boards today? Another $6-per-hour listing promising “flexible AI work from home.” Meanwhile, you’ve probably seen other platforms advertising $20 to $50+ per hour for AI training and wondered why the gap is so wide.

The difference isn’t marketing. It’s measurement.

Many platforms treat AI training as basic microtasks – clicking buttons, labeling images, transcribing short audio clips – and compensate accordingly.

We take a different approach.

At Coral Mountain, compensation reflects something deeper: the quality of your judgment. That judgment determines whether billion-dollar training runs produce models that truly reason well – or quietly optimize for the wrong objectives.

This guide explains what separates commodity microtasks from expert-level AI training: what premium platforms actually evaluate, how to demonstrate real judgment instead of just task completion, and why this work forms part of the infrastructure behind AGI development.

1. Understand why AI training pays more than crowdwork

Some platforms advertise “AI training” at $6 per hour. Others offer three to eight times that rate, roughly $20 to $50 per hour, for work that sounds similar on the surface. The pay difference comes down to what’s being measured – and valued.

Why task-completion metrics lead to commodity wages

Crowdwork platforms measure output in binary terms:

Was the checkbox clicked? Was the image labeled? Was the clip transcribed?

When completion is the only metric, the work becomes interchangeable. Anyone who can follow instructions competes for the same tasks. Pay naturally trends toward the lowest rate someone is willing to accept.

Speed becomes the primary differentiator. Volume becomes the business model. Quality becomes secondary.

Premium AI training platforms paying professional rates measure something else entirely: judgment quality.

- Does your feedback teach correct reasoning patterns – or reinforce superficial correlations?

- Can you detect when an AI answer sounds persuasive but misleads through omission?

- Can you identify edge cases where guidelines fail – and explain why?

These abilities determine whether advanced model training succeeds. That creates a completely different economic model.

The quality ceiling determines your earning potential

Think of every task type as having a “quality ceiling”: the point beyond which additional skill no longer improves the result.

Drawing bounding boxes around cars has a low ceiling. A trained annotator and a capable teenager will produce nearly identical outputs. There’s little room for added expertise – and pay reflects that.

Now consider evaluating AI-generated reasoning. There’s effectively no ceiling.

As models become more sophisticated, evaluation becomes harder – not easier. You’re no longer checking format compliance. You’re assessing:

- Whether conclusions follow logically from premises

- Whether analogies genuinely support claims

- Whether reasoning holds up under scrutiny

That requires intellectual engagement that increases in value as models improve. The gap between “passing automated checks” and “advancing capabilities” widens over time.

Why measurement infrastructure determines pay

If a platform can’t clearly explain how it measures quality beyond “we trust experienced workers,” it’s likely operating on crowdwork economics – regardless of branding.

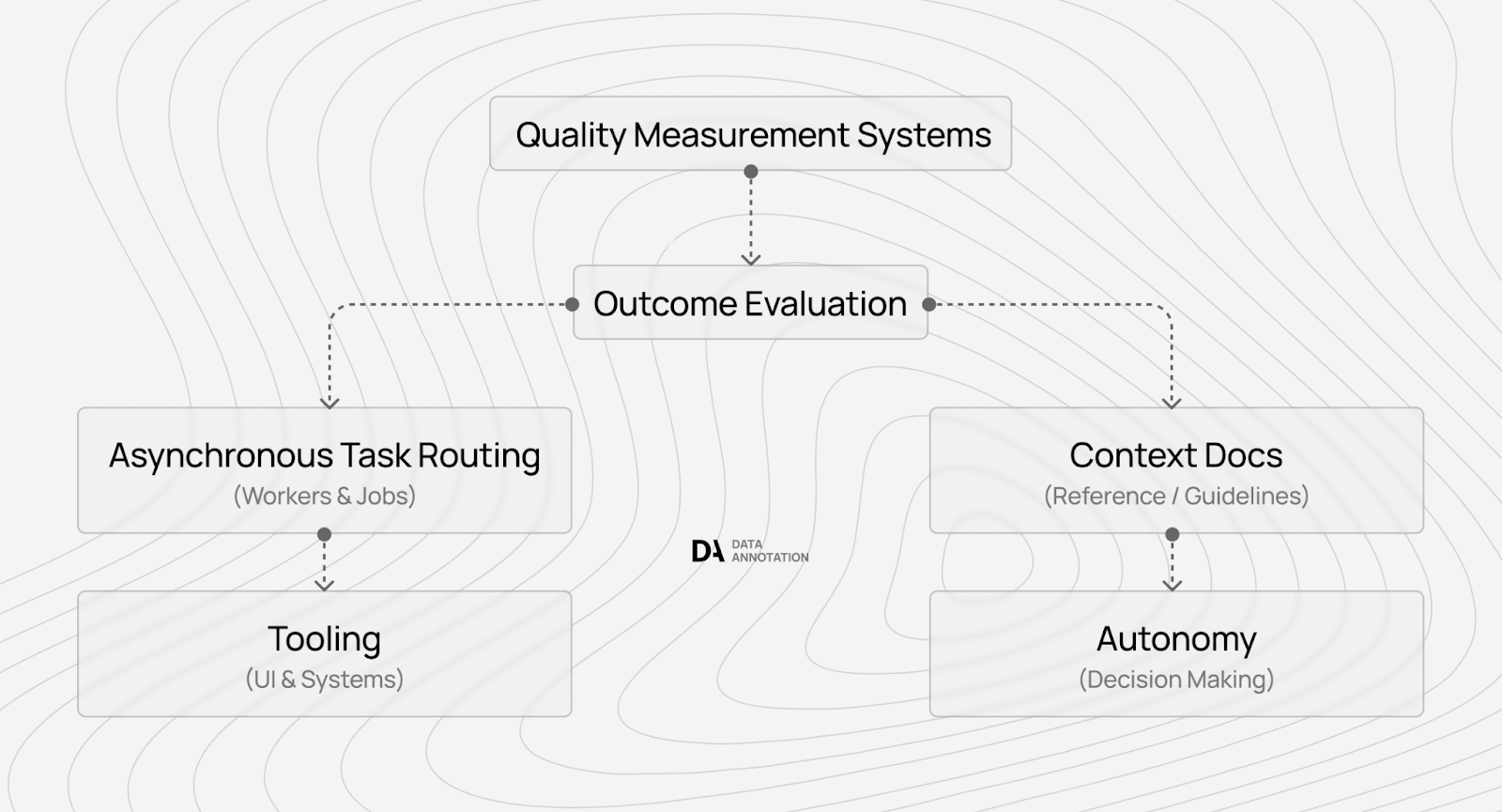

Professional rates require infrastructure that tracks and verifies quality.

When a platform can only measure “task completed: yes/no,” workers compete on price.

When it can measure how specific contributors improve model performance, it can justify higher hourly compensation.

That’s the difference between commodity work and professional AI training.

2. Choose platforms with quality measurement (not just resume verification)

Many AI training platforms verify credentials during signup, then distribute tasks and hope for quality output.

Without real quality measurement, they default to low-cost labor and volume-based models – leading to the familiar $6/hour dynamic.

How commodity platforms rely on credential checks

Credential screening feels rigorous, but without performance tracking, it doesn’t protect quality.

Workers compete on willingness to accept lower rates rather than demonstrated capability.

What real quality measurement looks like

Platforms with true infrastructure track patterns over time:

- Do contributors apply reasoning systematically?

- Do they slow down appropriately on complex evaluations?

- Does their feedback correlate with improved model behavior?

This isn’t resume-based pricing. It’s performance-based pricing. Access to higher-compensation projects depends on demonstrated capability – not just listed qualifications.
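To illustrate the difference between counting completed tasks and measuring judgment, here is a minimal Python sketch. It assumes a platform seeds projects with audit items that already have trusted reference labels, then scores each contributor with Cohen's kappa, a standard statistic for agreement corrected for chance. The audit-item setup, the sample labels, and the function name are assumptions made for illustration; this article does not describe how any specific platform, Coral Mountain included, computes quality.

```python
from collections import Counter


def cohen_kappa(reference, submitted):
    """Agreement between two label sequences, corrected for chance agreement."""
    if len(reference) != len(submitted) or not reference:
        raise ValueError("label sequences must be non-empty and of equal length")
    n = len(reference)
    # Fraction of items where the contributor matches the reference label.
    observed = sum(r == s for r, s in zip(reference, submitted)) / n
    # Agreement expected by chance, given each side's label frequencies.
    ref_counts, sub_counts = Counter(reference), Counter(submitted)
    expected = sum(ref_counts[k] * sub_counts[k] for k in ref_counts) / (n * n)
    if expected == 1.0:
        return 1.0  # both sequences consist of one identical label
    return (observed - expected) / (1 - expected)


# Hypothetical audit items with trusted reference labels.
reference = ["good", "bad", "good", "bad", "good", "bad"]
# Hypothetical contributors: both completed all six tasks.
careful = ["good", "bad", "good", "bad", "good", "good"]
rushed = ["good"] * 6  # always picks the same answer

for name, labels in [("careful", careful), ("rushed", rushed)]:
    print(name, "completed:", len(labels), "kappa:", round(cohen_kappa(reference, labels), 2))
```

Both hypothetical contributors finish every task, so a completion metric cannot tell them apart. The kappa score can: the contributor who always answers “good” scores 0.0, meaning no agreement beyond chance, while the careful one scores about 0.67.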

Questions to evaluate a platform

Before applying, ask:

- How is work quality measured beyond credential checks?

- Is advancement based on time served or performance?

- How does the platform prove value to companies training frontier models?

Clear, technical answers suggest real infrastructure. Vague language about “team review” usually signals a body-shop model that struggles to support premium rates.

Universal red flags still apply:

- Upfront fees

- Crypto-only payment

- NDAs before rate disclosure

- No public track record

The key distinction is whether the platform can measure and verify quality – not just advertise it.

3. Build demonstrated capabilities (credentials don’t predict performance)

Traditional career advice emphasizes accumulating credentials. For quality AI training work, that strategy often misses the point.

Credentials correlate only weakly with the quality of judgment that premium platforms measure.

Why credentials often fail

Across domains, formal qualifications don’t always predict practical reasoning ability.

Technical degrees don’t guarantee production-ready thinking. Advanced humanities credentials don’t automatically translate into sharp analytical judgment.

AI training exposes this gap. A subject-matter expert may know the material but struggle to explain why an AI’s reasoning feels subtly wrong despite technical correctness.

Judgment, not credentials, determines performance.

What premium platforms evaluate

Platforms like Coral Mountain test for capabilities such as:

- Detecting subtle tonal shifts in AI-generated writing

- Identifying logical gaps without external prompts

- Maintaining consistency across large datasets

- Explaining why one response demonstrates insight while another merely sounds sophisticated

Starter Assessments emphasize:

- Writing precision

- Critical reasoning

- Sustained attention

- Principled judgment

These qualities predict success more reliably than resume lines.

Practice judgment, not just accumulation

Improvement comes from practicing structured reasoning:

- Compare two AI outputs and articulate which is stronger – and why

- Identify unsupported claims, weak analogies, or logical leaps

- Explain your evaluation process clearly and systematically

The goal isn’t overnight transformation. It’s demonstrating that your thinking produces value that automated systems can’t replicate and that credential-focused workers can’t consistently match.

4. Pass assessments that measure judgment (not speed)

Most qualification tests measure recall. Premium AI training assessments measure reasoning under ambiguity.

They evaluate how you think when guidelines don’t explicitly cover a case.

At Coral Mountain, speed isn’t the priority. Quality is.

What assessments actually measure

They test whether you can:

- Apply principles to novel situations

- Articulate reasoning clearly for asynchronous review

- Recognize when deeper analysis is required

- Maintain consistency across varied scenarios

Most assessments take 60–90 minutes. Specialized tracks may require up to two hours. The duration reflects real project conditions – sustained focus and quality matter.

Track-specific evaluation

We offer Starter Assessments aligned with areas of expertise:

- General track: writing clarity and reasoning ($20+ per hour)

- Coding track: programming and code review ($40+ per hour)

- STEM track: math, chemistry, biology, physics ($40+ per hour)

- Professional track: law, finance, medicine ($50+ per hour)

- Language track: multilingual fluency ($20+ per hour)

How to prepare effectively

Avoid:

- Rushing to finish

- Guessing what the “correct answer” might be

- Demonstrating knowledge breadth without depth

Instead:

- Make your reasoning transparent

- Highlight ambiguity and justify decisions

- Engage with underlying principles, not surface rules

For example, if guidelines say “prefer factual accuracy,” a surface-level evaluator might reward citations regardless of relevance.

A judgment-focused evaluator recognizes when citations create false credibility because they don’t actually support claims – and explains why that matters.

That distinction separates checkbox compliance from principled reasoning.

Explore AI training projects at Coral Mountain

Many platforms position AI training as gig work. At Coral Mountain, it functions as infrastructure supporting frontier model development.

When you evaluate AI-generated code or reasoning, your decisions influence how models balance helpfulness and truthfulness, how they handle ambiguity, and whether their reasoning generalizes beyond pattern memorization.

If you’re ready to apply:

- Visit the Coral Mountain application page and click “Apply.”

- Complete the short background and availability form.

- Take the Starter Assessment.

- Check your inbox for a decision (typically within a few days).

- Access your dashboard, select your first project, and begin earning.

There are no signup fees. Selectivity ensures quality standards remain high.

Note: The Starter Assessment can only be taken once – prepare carefully before beginning.

If you understand why quality matters more than volume in advancing frontier AI – and you can demonstrate the judgment to match – Coral Mountain is built for you.