Scroll through AI trainer job listings and you’ll notice a pattern: advanced degrees preferred, machine learning background required, strong analytical skills essential. On paper, it sounds like an academic fellowship.

Yet when candidates actually go through assessments, the platforms measure something very different.

This gap explains why some PhD holders fail qualification tests while self-taught programmers pass – and why someone who reads deeply and writes consistently can sometimes create stronger training data than an English literature PhD.

Below, we break down what truly predicts performance (judgment quality, domain fluency, systematic reasoning), what tends to be overrated (credentials, ML theory, generic “analytical skills”), and how assessment-based hiring actually works.

The AI training requirements that don’t predict quality

Many postings emphasize credentials and technical knowledge that look impressive but reveal little about real-world training performance. After reviewing thousands of AI trainer outcomes, we found three patterns that consistently fail to predict high-quality data, and that occasionally correlate with worse results.

Machine learning knowledge

Common belief: Trainers need strong ML fundamentals – model architectures, loss functions, training pipelines.

Reality: Deep ML knowledge can sometimes interfere with training performance.

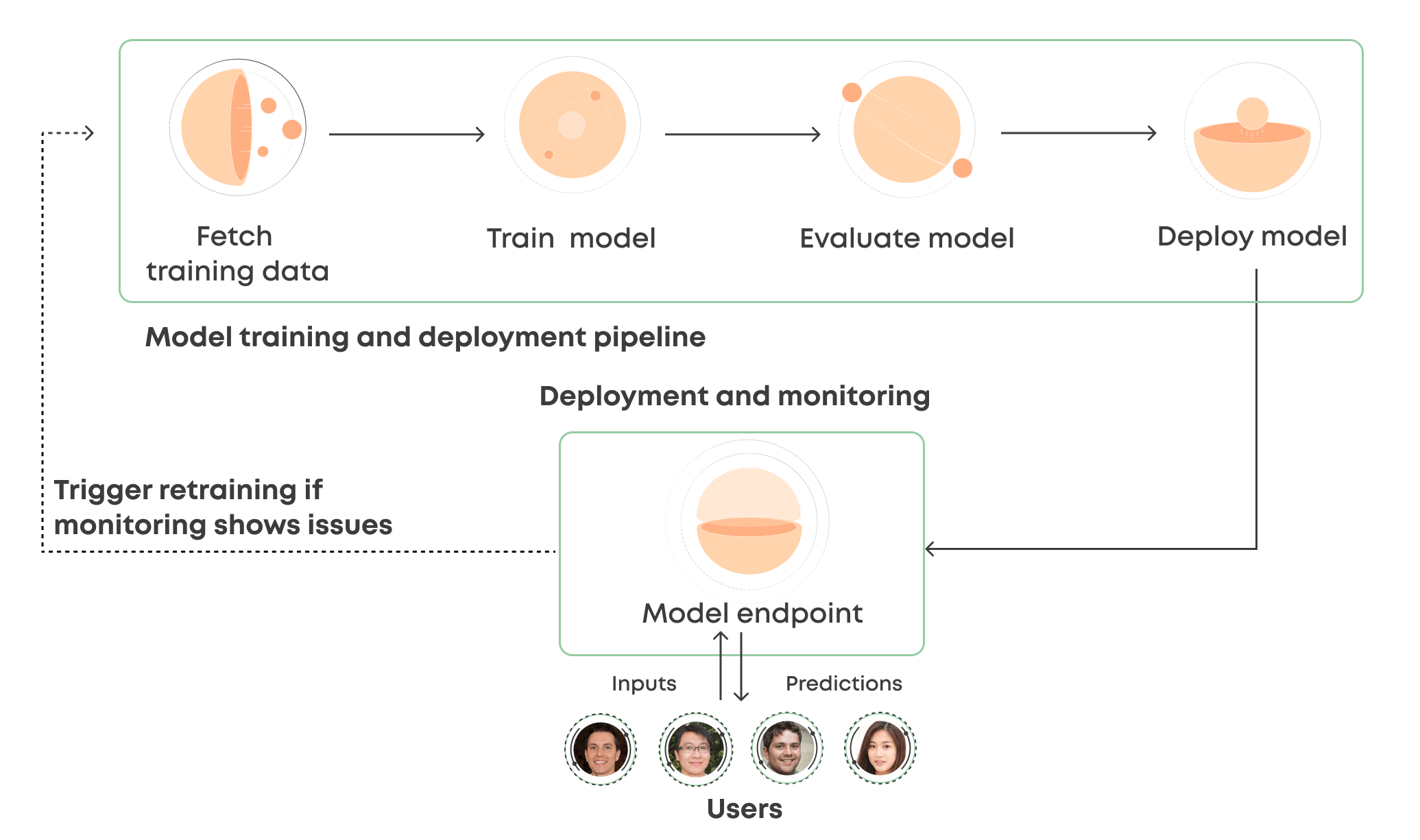

Why? Because individuals trained in ML often optimize for the metrics they were taught to value: benchmark scores, loss reduction, inter-annotator agreement. These are useful in research settings – but they don’t reliably predict whether models behave well in production.

The issue intensifies when trainers know enough to unintentionally game evaluation systems. They notice that longer responses may be rated higher. Adding formatting or emojis might increase preference scores. Optimizing for these superficial signals can produce models that look polished but reason poorly.
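
One rough way to check for this kind of surface-level bias is to measure how closely ratings track response length. The sketch below is illustrative only: the `ratings` data is made up, and the `pearson` helper is a hypothetical utility written for this example, not part of any platform's tooling.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


# Hypothetical (response_text, preference_score) pairs, invented for illustration.
ratings = [
    ("Short answer.", 2),
    ("A somewhat longer answer with more words.", 3),
    ("A long answer that repeats itself and pads the point with filler text.", 4),
    ("An even longer answer, with bullet points, headings, emojis, and very little reasoning.", 5),
]

# Use word count as a crude proxy for response length.
lengths = [len(text.split()) for text, _ in ratings]
scores = [score for _, score in ratings]

length_bias = pearson(lengths, scores)
print(f"length/score correlation: {length_bias:.2f}")
```

A strong positive correlation does not prove that raters reward length for its own sake, since longer answers can also be better answers. It is a prompt to read the highly rated long responses and check whether the reasoning holds up.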

What actually matters: Understanding the training–inference gap – why models that excel on benchmarks can fail in real-world applications. That requires judgment about objectives and outcomes, not architectural expertise.

Advanced degrees

Common belief: PhDs bring rigor, depth, and analytical strength.

Reality: Credentials show academic achievement – not necessarily evaluative skill.

History offers countless examples of influential writers without formal credentials, and of credentialed experts who struggle with practical output. The same pattern appears in AI training.

For creative and evaluative tasks, the credential–quality gap widens. When training a model to write poetry about the moon, holding a literature PhD doesn’t guarantee that someone can distinguish between a vivid, image-driven haiku and a generic verse about “the moon’s pale glow.”

Individuals who read widely, write often, and develop taste through exposure frequently outperform those relying solely on academic background.

What actually matters: Domain fluency combined with practical intuition – the creative judgment and resilience needed to solve ambiguous problems, which credentials don’t capture.

Generic analytical skills: missing the point

Common belief: Logical reasoning, attention to detail, and systematic thinking are sufficient.

Reality: These skills are necessary – but incomplete.

Many job descriptions emphasize breaking down complex problems and identifying patterns. That’s foundational. The more advanced skill is recognizing when you’re reinforcing the wrong patterns – when a model is optimizing for what looks impressive rather than what fulfills the objective.

Being able to compare two responses doesn’t guarantee you can determine whether either one is correct.

What actually matters: Quality judgment – the ability to separate “appears sophisticated” from “actually achieves the goal.” This includes recognizing when your own evaluation patterns could steer models in the wrong direction.

The AI trainer requirements that matter for AGI

The abilities that predict high-quality training data are difficult to screen using resumes. Four competencies consistently separate trainers who meaningfully advance frontier models from those who unintentionally waste compute optimizing the wrong objectives.

Understanding quality ceilings

Different tasks have different quality ceilings – and ceiling height determines how much expertise influences outcomes.

Drawing a bounding box around a car has a low ceiling. Almost anyone can perform it adequately. Expertise adds limited incremental value.

Writing poetry about the moon has no clear ceiling. Nobel laureates and high school students produce fundamentally different outputs. Perspective, craft, and voice matter.

As AI systems grow more capable, tasks shift upward in ceiling height. Models don’t need help with simple classification; they need nuanced feedback on creativity, reasoning, ambiguity handling, and subjective quality dimensions.

Trainers who understand quality ceilings know where expertise compounds – and where tasks can be automated.

Objective vs. subjective judgment

Low-ceiling tasks can rely on mechanical consistency: checkboxes, rubrics, agreement metrics.

Higher-ceiling tasks cannot.

Imagine evaluating three poems about the moon:

- A minimalist haiku capturing reflected light

- A structured poem built on internal rhyme

- A reflective piece exploring emotional symbolism

There is no single objectively “correct” winner. Each demonstrates value differently.

When trainers force artificial objectivity (chasing inter-annotator agreement at all costs), models converge toward bland, generic outputs. When trainers allow principled subjectivity, models learn richer patterns.

High-quality trainers understand when diversity of judgment enhances learning – and when strict consistency matters.

Visceral understanding of data

Many trainers rely on metrics without deeply examining examples.

They track loss curves and preference scores – but rarely read long sequences of outputs to detect systematic drift. They don’t compare examples side by side to observe subtle differences. They miss patterns automated filters may suppress.

This creates blind spots.

Developing a visceral sense of data means:

- Reading hundreds of examples directly

- Comparing your judgments with other skilled evaluators

- Identifying where initial instincts were wrong

- Recognizing patterns before metrics confirm them

It’s a cultivated intuition – and a strong predictor of quality.

Scalable oversight intuition

As models exceed human performance on benchmarks, training evolves.

Instead of writing every example manually, skilled trainers refine model drafts. Instead of labeling every edge case, they identify recurring failure patterns and design targeted interventions.

Scalable oversight means knowing:

- When your creativity adds value

- When your evaluative judgment is essential

- When AI assistance improves efficiency without sacrificing quality

This ability expands your impact – and it’s difficult to measure through resumes alone.

How to develop what AI training requires

You can’t earn a degree in judgment. You build it deliberately.

Build quality judgment through comparative analysis

Systematically compare examples across quality levels.

Ask:

- Why did the expert choose that annotation?

- What did the novice overlook?

- Which details are meaningful versus distracting?

For language tasks, compare outputs from different models or settings. Identify responses that appear fluent but contain subtle flaws.

For technical tasks, study code that passes tests yet violates best practices. Analyze arguments that cite sources but draw unsupported conclusions.

Over time, this comparative habit develops taste – recognizing quality before metrics confirm it.

Practice identifying measurement failure

Metrics often optimize the wrong target.

Look for cases where:

- Automated scores are high but obvious flaws remain

- Annotator agreement is strong but the annotators all miss the same issue

- Benchmark success doesn’t translate to real-world reliability

- Synthetic training data causes model degradation

These divergences build skepticism toward surface-level indicators and sharpen intuition about objective alignment.
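
The second case above, strong agreement alongside a shared blind spot, can be made concrete with a small sketch. Everything here is hypothetical: the labels are invented, and `agreement_rate` and `accuracy` are illustrative helpers, not a description of how any platform scores annotators.

```python
def agreement_rate(labels_a, labels_b):
    """Fraction of items on which two annotators gave the same label."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("need two non-empty label lists of equal length")
    matches = sum(a == b for a, b in zip(labels_a, labels_b))
    return matches / len(labels_a)


def accuracy(labels, reference):
    """Fraction of labels that match a trusted reference judgment."""
    if len(labels) != len(reference) or not labels:
        raise ValueError("need two non-empty label lists of equal length")
    hits = sum(label == ref for label, ref in zip(labels, reference))
    return hits / len(labels)


# Hypothetical data: two annotators rate six responses as "good" or "bad".
annotator_a = ["good", "good", "good", "bad", "good", "good"]
annotator_b = ["good", "good", "good", "bad", "good", "bad"]
# Hypothetical careful expert review of the same six responses.
expert = ["good", "bad", "bad", "bad", "good", "bad"]

agreement = agreement_rate(annotator_a, annotator_b)
accuracy_a = accuracy(annotator_a, expert)
accuracy_b = accuracy(annotator_b, expert)

# Items where both annotators agree with each other but not with the expert.
shared_misses = [
    i
    for i, (a, b, e) in enumerate(zip(annotator_a, annotator_b, expert))
    if a == b and a != e
]

print(f"agreement: {agreement:.2f}")
print(f"accuracy vs expert: A={accuracy_a:.2f}, B={accuracy_b:.2f}")
print(f"shared misses at items: {shared_misses}")
```

In this toy data the annotators agree on most items while each matches the expert far less often, and the shared misses point to the specific examples worth rereading. Raw agreement is used here for simplicity; a chance-corrected measure such as Cohen's kappa would be a reasonable substitute.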

Develop domain fluency beyond credentials

Credentials may provide legitimacy, but fluency comes from immersion.

Strengthen it through:

- Reading primary sources (legal opinions, proofs, clinical documentation)

- Studying unusual edge cases

- Building cross-domain connections

The most effective experts often see how ideas transfer across disciplines.

Cultivate contrarian instincts

Valuable trainers question consensus.

When everyone optimizes for a benchmark, ask what it overlooks.

When speed is prioritized, consider the hidden quality costs.

When metrics simplify evaluation, examine the nuance they discard.

This isn’t about rejecting standards. It’s about distinguishing what works from what merely appears effective – and having the confidence to advocate for better approaches.

How to get an AI training job

High-quality AI training demands awareness that every judgment compounds through the pipeline and ultimately shapes systems used by millions.

At Coral Mountain, we use a tiered qualification system that validates demonstrated performance – not resumes.

Entry begins with a Starter Assessment, typically about an hour long. It’s not a credential screen. It’s a practical evaluation of whether you can perform the work.

Successful candidates enter a compensation structure aligned with expertise:

- General projects: From $20/hour for chatbot evaluation and prompt writing

- Multilingual projects: From $20/hour for translation and localization

- Coding projects: From $40/hour for code evaluation across major languages

- STEM projects: From $40/hour for advanced domain tasks

- Professional projects: From $50/hour for law, finance, or medical expertise

After qualification, you select projects from a dashboard tailored to your expertise level. Each listing outlines requirements, scope, and deliverables.

Work hours are flexible. There are no minimum commitments or mandatory schedules. You work when it fits your life.

Explore an AI trainer job at Coral Mountain

For those seeking meaningful work, AI training is more than annotation. It’s shaping systems that expand what technology can accomplish.

At Coral Mountain, your expertise – whether domain knowledge, evaluative precision, creativity, or structured reasoning – directly influences model capability.

Getting started takes five steps:

- Visit the Coral Mountain application page and click “Apply.”

- Complete the short background and availability form.

- Take the Starter Assessment.

- Check your inbox for the decision (typically within a few days).

- Log in, select your first project, and begin earning.

There are no signup fees. Selectivity ensures quality standards remain high. The Starter Assessment can only be taken once, so prepare thoroughly.

Apply to Coral Mountain if you understand why quality consistently outperforms volume in advancing frontier AI – and you’re ready to contribute at that level.