You’ve likely seen this happen: you give a model like ChatGPT or Claude two examples of what you want, and suddenly everything clicks. The third output matches your format, tone, and intent almost perfectly. No retraining. No fine-tuning. You simply showed the model what “good” looks like.

That capability is called in-context learning.

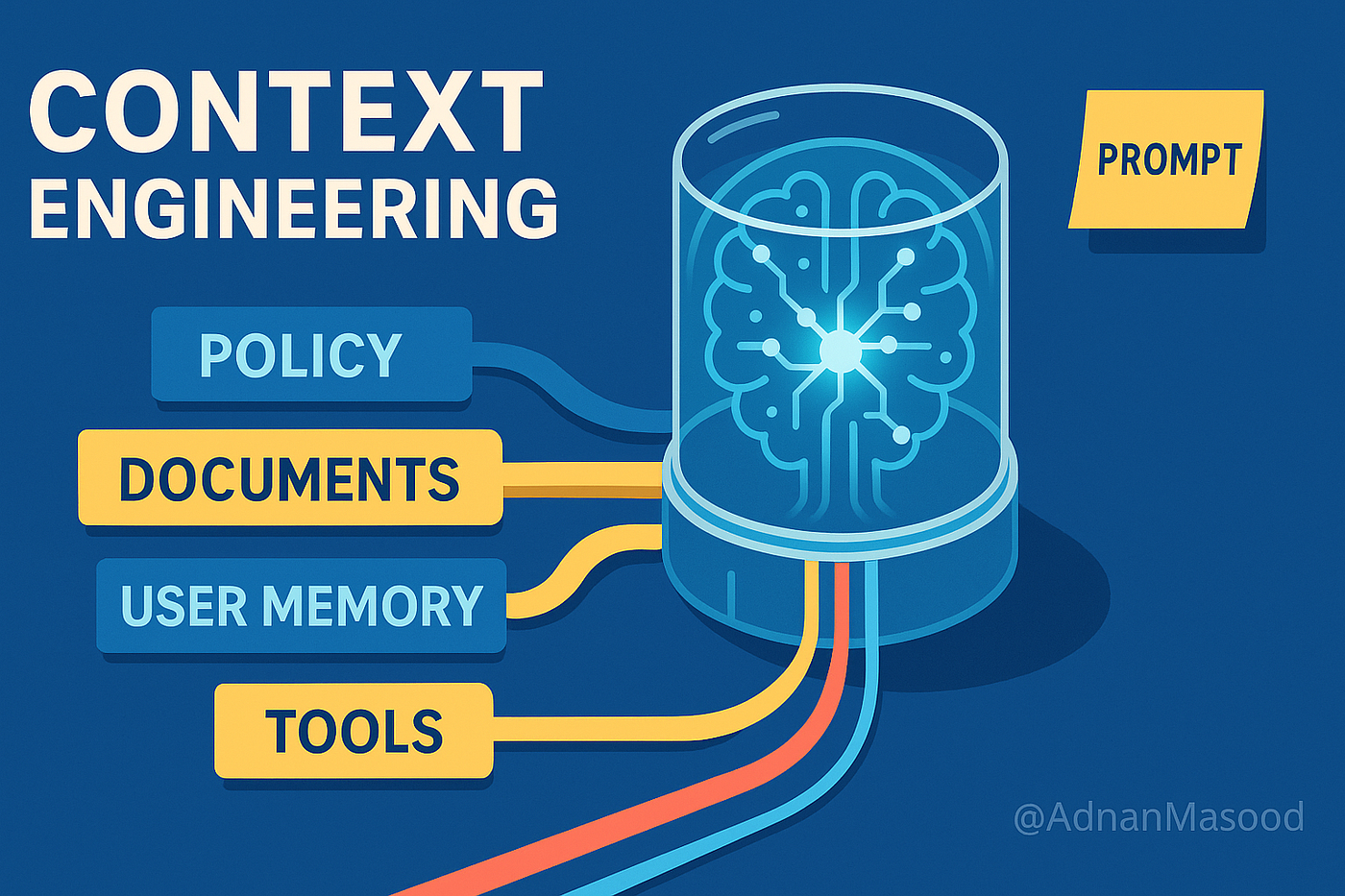

In-context learning has fundamentally reshaped how AI systems are deployed. It has also created an entire category of work that barely existed just a few years ago.

What is in-context learning (ICL)?

In-context learning refers to a large language model’s ability to perform a task by learning directly from examples embedded in the prompt, without any changes to its parameters or weights. You provide a few demonstrations, and within a single forward pass, the model generalizes to new cases.

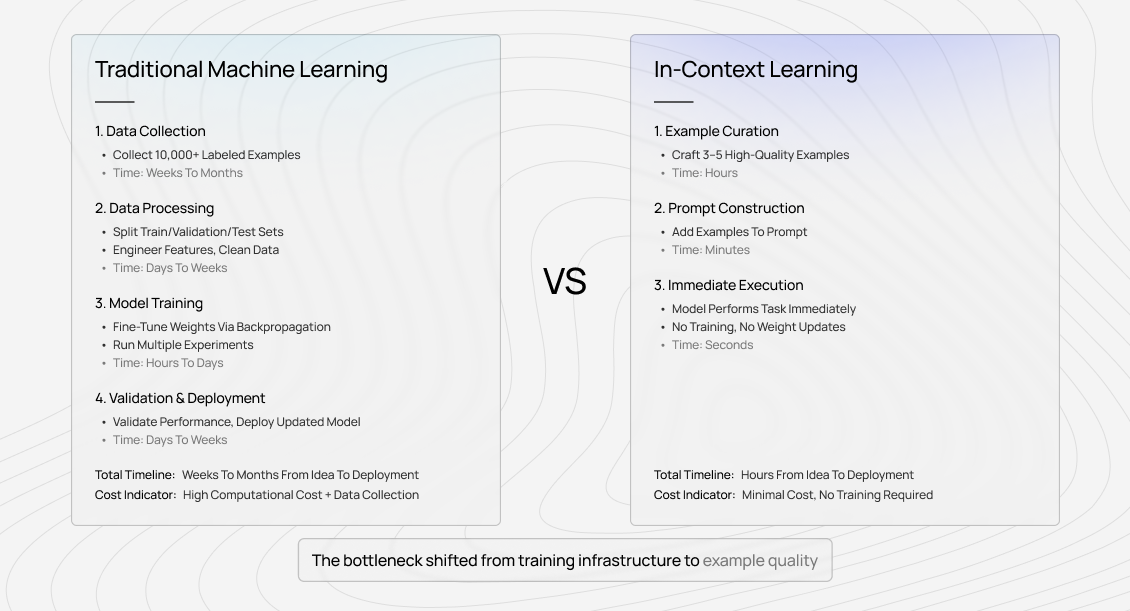

Traditional machine learning depends on training pipelines: large labeled datasets, gradient descent, repeated optimization, and validation cycles. In-context learning bypasses all of that. The model identifies the pattern in your examples and applies it immediately.

The key difference isn’t just speed. It’s that the model’s pre-trained knowledge-language, reasoning, task structure learned from trillions of tokens-can be steered toward a specific objective purely through examples.

You’re not modifying the model. You’re directing it.

Applications of in-context learning

In practice, in-context learning now appears across almost every domain where AI systems interact with specialized content.

It is widely used for classification tasks such as sentiment analysis, moderation, document tagging, and intent detection. A legal tech team, for example, can provide a handful of examples of “discovery-relevant” documents, and the model reliably applies that judgment across thousands of new files.

It also powers information extraction workflows: pulling entities, relationships, or structured fields from unstructured text. In healthcare, a few well-chosen examples of extracting dosage information from clinical notes can enable the model to handle variations it has never explicitly seen.

In constrained generation, teams rely on examples to enforce tone, formatting, and brand voice. Marketing groups often show a model how their writing should sound, and the model maintains that consistency across large volumes of content.

Translation and localization benefit as well, especially when standard translation isn’t enough. By providing examples of domain-specific phrasing or cultural adaptation, companies can guide models to produce outputs aligned with internal standards rather than generic translations.

Across all these use cases, the pattern is the same: performance depends on example quality. Generic demonstrations lead to generic outputs. Carefully designed, domain-aware examples produce reliable results.

Why in-context learning emerged from scale

In-context learning wasn’t deliberately engineered. It emerged as models grew larger.

When GPT-3 launched with 175 billion parameters, researchers observed something unexpected: the model could perform tasks it had never been explicitly trained on, simply by seeing examples in the prompt. Smaller models hinted at this ability, but only at larger scales did it become consistent enough for real-world use.

As model size increased, so did the reliability of this behavior. Larger models needed fewer examples, handled subtler distinctions, and generalized more effectively.

This wasn’t a new feature added to the architecture. It was a side effect of training on massive, diverse corpora where problems are frequently introduced alongside demonstrations. Over time, models learned meta-patterns: how examples signal rules, how instructions map to outputs, and how tasks are structured in natural language.

That emergence changed deployment strategies across the industry. Instead of maintaining many fine-tuned models, organizations could rely on a single foundation model and guide it through prompts. The constraint shifted from training infrastructure to the quality of examples.

How does in-context learning work in AI models?

While the full mechanism is still under active research, several components are well understood.

Attention patterns recognize task structure

When examples appear in a prompt, the model’s attention layers detect structural regularities: relationships between inputs and outputs, formatting patterns, and implicit rules. The model then compares the new query against those patterns.

Crucially, this learning is temporary. Once the interaction ends, the model retains nothing. Its weights never change; only the way attention is allocated within that single context.

Pre-training enables meta-learning

During pre-training, models are exposed to countless situations where examples precede solutions: tutorials, documentation, Q&A threads, research papers. The model internalizes statistical patterns associated with “learning from demonstration.”

When you include examples in a prompt, you’re activating those learned patterns. The model recognizes the structure and responds accordingly.

Context processing without weight updates

No gradients are computed. No parameters are updated. The model applies existing knowledge to the current context. This also defines a hard limit: in-context learning cannot introduce truly novel concepts. It can only reorganize and apply what the model already knows.

That’s why example selection matters so much. You’re not teaching new knowledge-you’re triggering the right internal pathways.

Approaches for in-context learning

In practice, in-context learning falls into three broad modes.

Zero-shot learning

Zero-shot prompts provide instructions but no examples. This works well for tasks that closely resemble patterns already present in training data, such as basic sentiment analysis or summarization.

However, zero-shot approaches break down when tasks involve specialized terminology, unusual formats, or nuanced distinctions. The ceiling is fixed by pre-training; if the model hasn’t seen similar patterns before, performance stalls.

Few-shot learning

Few-shot learning-typically two to ten examples-is the most common and effective approach. Here, example quality dominates outcomes.

Small changes in example selection can lead to large performance differences. Expert-curated examples that highlight edge cases consistently outperform randomly chosen demonstrations.

Few-shot examples implicitly communicate what matters. They teach the model where ambiguity exists and how to resolve it.

Many-shot learning

As context windows expanded, many-shot learning became possible. Providing dozens or even hundreds of examples can improve performance for complex tasks-up to a point.

Beyond that point, returns diminish. Redundant examples introduce noise rather than clarity. The deciding factor is diversity, not volume. A small number of strategically chosen examples often outperform large collections of similar ones.

Where in-context learning breaks down

Despite its elegance, in-context learning has clear limitations.

Context window constraints remain binding. Even with very large windows, teams often must choose between including full inputs or comprehensive examples. Truncation can silently undermine performance.

Another challenge is inconsistency. Prompts that perform well in testing may fail unpredictably in production. This often traces back to insufficient coverage in the examples rather than a flaw in the model.

Over time, example drift becomes a bottleneck. As real-world inputs evolve, static examples no longer represent reality. Performance declines-not because the model changed, but because the examples stopped matching the task distribution.

When models need actual training

In-context learning has a ceiling.

Tasks with novel structures unseen during pre-training often require fine-tuning. Scenarios that demand consistent behavior across sessions also favor training, since in-context learning is inherently temporary.

Complex multi-step reasoning processes can become impractical to encode through examples alone. When prompts grow longer but results plateau, it’s usually a signal that training-not prompting-is required.

How in-context learning changed AI training roles

In-context learning created new roles centered on prompt design and example curation. Success in these roles depends less on writing skill and more on teaching intuition: knowing which examples clarify a task and which obscure it.

Domain expertise is especially valuable. Experts recognize edge cases that non-specialists miss, and those edge cases often determine whether a system succeeds in production.

The skills that matter for in-context learning work

Strong practitioners excel at identifying representative patterns, selecting instructive edge cases, and evaluating outputs for true correctness rather than surface fluency.

As models become more capable, the need for human judgment doesn’t disappear-it becomes more concentrated and more valuable.

Contribute to AGI development at Coral Mountain

In-context learning has transformed AI deployment, but it has not removed the need for human expertise. It has shifted that need toward example quality, judgment, and domain understanding.

At Coral Mountain, this work sits at the center of frontier AI development. Contributors with technical knowledge, domain expertise, and strong critical thinking help determine whether in-context learning succeeds or fails in real systems.

Thousands of remote contributors already support this ecosystem.

To get started:

- Visit the Coral Mountain application page

- Submit your background and availability

- Complete the starter assessment

- Receive an approval decision

- Begin working on active projects

No fees. High standards. Real impact.

Apply to Coral Mountain if you understand why quality matters more than volume in advancing AI-and if you have the expertise to contribute.

Coral Mountain Data is a data annotation and data collection company that provides high-quality data annotation services for Artificial Intelligence (AI) and Machine Learning (ML) models, ensuring reliable input datasets. Our annotation solutions include LiDAR point cloud data, enhancing the performance of AI and ML models. Coral Mountain Data provide high-quality data about coral reefs including sounds of coral reefs, marine life, waves, Vietnamese data…