You want to work from home, but most remote listings feel suspicious. One promises $10 per hour for AI training tasks you can supposedly do from your couch. Another offers $15 for what appears to be the same work. A third asks for $45 upfront for “certification materials” before you can even apply.

Here’s the reality few job guides explain: most platforms advertising remote data annotation cannot truly maintain quality at scale. They compete on cost, not capability. And remote work without strong measurement systems quickly becomes inconsistent and low-value.

Platforms like Coral Mountain don’t treat flexibility as a perk. They built technology that tracks quality across thousands of contributors working in different time zones.

That infrastructure is expensive to develop – but it enables premium pricing because it proves measurable value to AI companies building frontier models. Understanding this difference determines whether you access legitimate $20–$50+ per hour work or spend months on low-paid microtasks.

This guide explains why remote AI training differs from office-based annotation, what remote platforms actually evaluate, and why working with technology-driven companies places you closer to the infrastructure layer of global AGI development rather than the gig economy.

1. Understand why remote AI training requires technology (not just trust in workers)

Many people assume remote work simply means “the same job in a different location.” That might be true for data entry or customer service. It is not true for AI training that influences how frontier models reason, interpret language, and make decisions.

Remote AI training demands infrastructure that most platforms simply do not have.

Why proximity-based quality control fails remotely

Historically, in-person annotation relied on physical supervision.

Managers could observe screens, answer questions instantly, and notice when someone rushed or misunderstood instructions. Quality control relied on proximity.

This model works when workers are in the same location. It breaks down entirely when they are distributed globally.

In remote settings, no one walks past your desk. No one corrects misunderstandings in real time. Without systematic quality measurement, output becomes inconsistent because there is no structured feedback loop connecting individual performance to platform standards.

That’s why many platforms pay $5–$10 per hour. They verify credentials, grant access, and hope performance is adequate. Since they cannot prove their data quality is superior, they compete on price.

The infrastructure gap between $5/hour and $20/hour remote work

Platforms that successfully pay $20 to $50+ per hour invest in technology that outperforms in-person supervision.

Sophisticated measurement systems detect quality patterns across thousands of contributors – patterns no single manager could reliably track.

At Coral Mountain, tiered compensation ($20+ for general work, $40+ for coding and STEM, and $50+ for work requiring professional credentials) reflects measured expertise, not arbitrary pricing.

The system identifies contributors who demonstrate true capability through their output, not through résumé keywords. That measurable value allows Coral Mountain to support frontier models developed by leading AI companies.

The practical takeaway: if onboarding focuses only on credential checks without testing demonstrated ability, the platform is predicting performance from résumés rather than measuring real output. That model rarely succeeds in remote environments.

2. Build self-direction capabilities that remote work actually tests

Remote AI platforms evaluate more than knowledge. They assess whether you can maintain high standards independently, without constant oversight.

What disappears without real-time supervision

In an office, you can ask quick questions, clarify ambiguous cases, or observe how colleagues approach tasks.

Remote work removes those supports.

You must interpret guidelines independently. You must apply principles to new situations. You must sustain consistent reasoning without immediate validation.

These aren’t obstacles – they are capability filters.

Frontier model training increasingly requires annotators who deeply understand principles, extrapolate beyond explicit examples, and recognize when a case demands thirty minutes of careful thought rather than five minutes of surface review.

In-person supervision can compensate for shallow understanding. Remote environments cannot.

The capabilities remote platforms measure

Remote AI training environments test:

- Writing precision that detects subtle tone shifts

- Critical reasoning without peer discussion

- Consistency across large datasets

- Judgment when guidelines don’t explicitly cover a case

Developing these skills requires deliberate practice with ambiguity. When guidelines don’t provide a direct answer, strong contributors articulate the principles involved and reason through trade-offs instead of searching for shortcuts.

For those transitioning from office or gig work, this is the steepest adjustment. The goal is not simply to understand good annotation – it is to maintain those standards consistently and independently.

Contributors who develop strong self-direction become more valuable over time. They require less oversight, identify edge cases proactively, and sustain quality that compounds across projects.

3. Choose platforms where technology enables remote quality measurement

Remote annotation economics are simple: platforms without measurement systems compete on price and pay low wages. Platforms with advanced measurement infrastructure can prove value to clients and justify premium rates.

Understanding this difference prevents wasted time.

Why measurement infrastructure determines pricing power

Commodity platforms emphasize credential verification: degrees, years of experience, prior employers.

This approach works better in supervised office settings. It fails remotely, where credentials alone weakly predict performance.

Such platforms typically offer $5–$15 per hour because they cannot reliably differentiate high-quality contributors from average ones.

Technology-focused companies like Coral Mountain operate differently. Tier advancement is performance-based, not time-based. Contributors move up because their output demonstrates capability.

Measurement systems detect contributors who consistently catch edge cases, provide nuanced reasoning, or identify issues automated validators miss. These contributors can command $40–$50+ per hour because their feedback reduces costly model training errors.

Without measurement systems, platforms cannot identify these differences, so everyone is paid the same low rate regardless of skill.
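To make "measurement" concrete, here is a minimal sketch of one common approach: scoring each contributor against a small set of items whose correct labels are already known. This is an illustration only, not a description of Coral Mountain's system. The data, the names `accuracy_by_contributor` and `meets_threshold`, and the 0.9 cutoff are all assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical submissions: (contributor_id, item_id, submitted_label).
submissions = [
    ("ana", "q1", "safe"), ("ana", "q2", "unsafe"), ("ana", "q3", "safe"),
    ("ben", "q1", "safe"), ("ben", "q2", "safe"), ("ben", "q3", "unsafe"),
]

# Hypothetical "gold" items whose correct labels were fixed in advance.
gold = {"q1": "safe", "q2": "unsafe", "q3": "safe"}


def accuracy_by_contributor(submissions, gold):
    """Return each contributor's share of gold items labeled correctly."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for contributor, item, label in submissions:
        if item not in gold:
            continue  # only items with a known answer can be scored
        total[contributor] += 1
        if label == gold[item]:
            correct[contributor] += 1
    return {c: correct[c] / total[c] for c in total}


def meets_threshold(scores, threshold=0.9):
    """List contributors at or above an assumed accuracy cutoff."""
    return sorted(c for c, s in scores.items() if s >= threshold)


scores = accuracy_by_contributor(submissions, gold)
print(scores)
print(meets_threshold(scores))
```

A platform that can produce numbers like these for every contributor has evidence to show clients. A platform that only checks résumés has nothing comparable, which is the pricing gap described above.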

How global access raises the quality ceiling

Remote work eliminates geographic limits.

Coral Mountain coordinates contributors across continents and time zones, accessing expertise that could never be concentrated in one office.

This raises the quality ceiling rather than lowering it. Experts from different fields and regions contribute through shared infrastructure.

Before applying, evaluate whether a platform can clearly explain:

- How work quality is measured beyond initial screening

- Whether tier progression is performance-based

- How they demonstrate value to AI clients

Specific answers involving technology and measurement indicate legitimacy. Vague references to “team review” or “industry standards” often signal commodity operations.

4. Pass assessments simulating actual working conditions

Remote assessments reveal what platforms truly value.

Office-oriented platforms test whether you can follow instructions with guidance available. Remote-first platforms test whether you can reason effectively in isolation.

At Coral Mountain, Starter Assessments simulate real remote conditions:

- Completed independently

- Contain ambiguous cases

- Evaluate reasoning transparency

- Measure time allocation judgment

Assessment tracks align with compensation tiers:

- General track: writing clarity, logic, detail orientation ($20+)

- Language track: fluency for multilingual projects ($20+)

- Coding track: programming and evaluation skills ($40+)

- STEM track: domain reasoning quality ($40+)

- Professional track: regulatory and contextual judgment ($50+)

Most assessments require 60–90 minutes. Specialized tracks may require up to two hours. This duration reflects real project demands and tests sustained focus.

Why reasoning matters more than “correct answers”

Remote assessments prioritize how you think.

When encountering ambiguous cases, platforms evaluate whether you apply principles systematically rather than guessing what seems correct.

Candidates often fail by rushing, avoiding complex cases, or trying to reverse-engineer expected answers.

Remote environments reward contributors who recognize when depth matters more than speed. Measurement systems can evaluate output quality, but they cannot correct flawed reasoning in real time.

The scheduling flexibility of remote work also allows you to take assessments during your peak focus hours – an advantage unavailable in office-based testing centers.

5. Scale through expertise depth, not work volume

In commodity work, income scales with hours worked. In premium AI training, income scales with expertise depth.

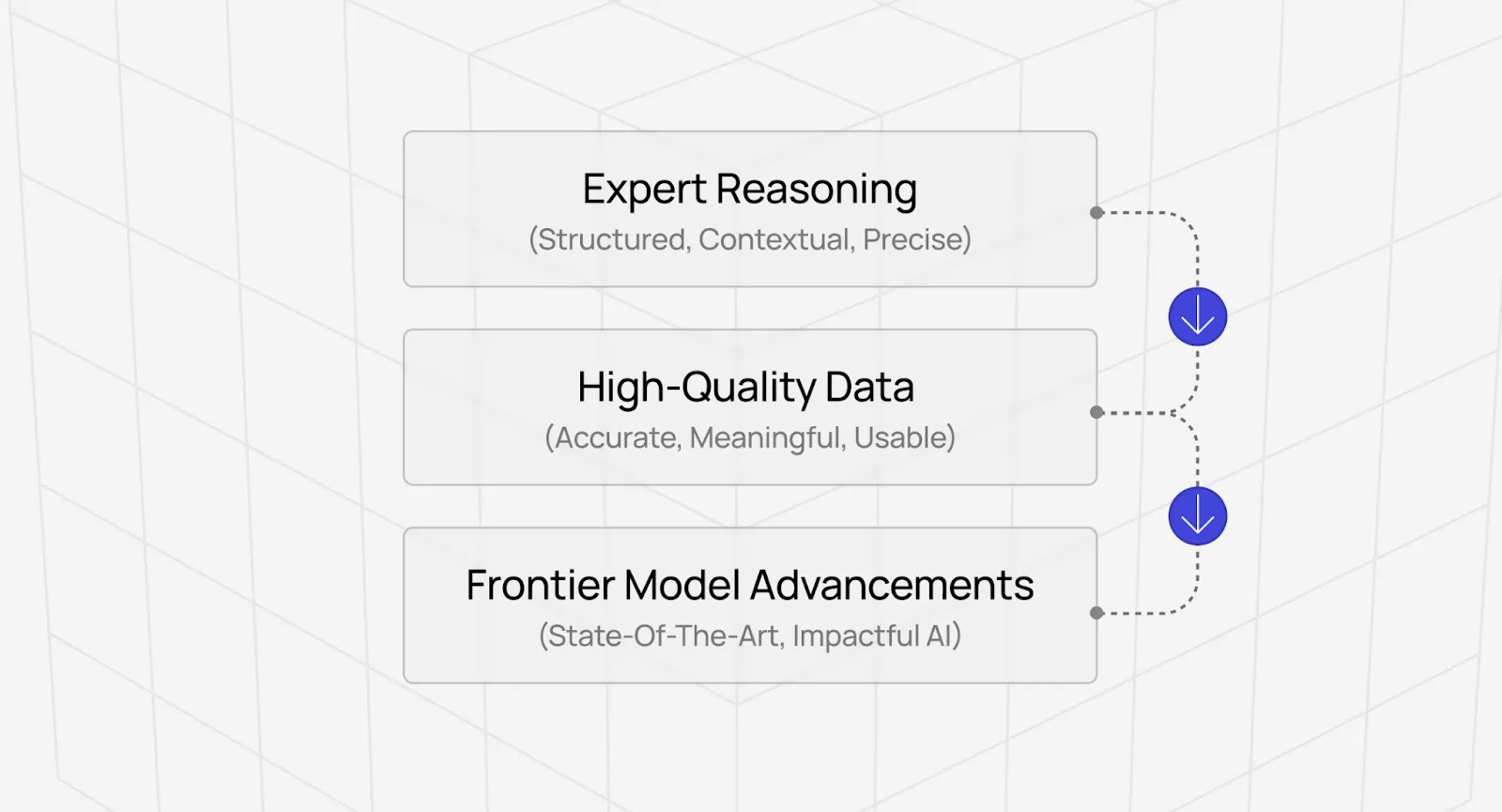

As AI models become more sophisticated, the work shifts toward tasks that automation cannot yet perform.

Earlier annotation tasks (like simple sentiment labeling) had low complexity and high automation risk.

Today’s frontier tasks involve evaluating reasoning chains, identifying subtle logical flaws, and refining multi-step outputs – work that trains the next generation of models.

What tier structures reveal

Coral Mountain’s tiers reflect measurement reality:

- $20+: consistent writing and critical thinking

- $40+: specialized coding or STEM reasoning

- $50+: professional judgment in regulated fields

Higher tiers correspond to rarer, verifiable expertise.
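The difference between scaling by hours and scaling by expertise is easy to see with a little arithmetic. The sketch below uses the rate floors quoted in this guide; the $400 weekly target and the name `hours_needed` are assumptions chosen for illustration, and actual pay depends on the project.

```python
import math

# Hourly rate floors quoted in this guide (USD); real rates may be higher.
RATE_FLOORS = {
    "commodity (low end)": 5,
    "general": 20,
    "coding / STEM": 40,
    "professional": 50,
}


def hours_needed(target_income, hourly_rate):
    """Whole hours required to reach target_income at hourly_rate."""
    return math.ceil(target_income / hourly_rate)


WEEKLY_TARGET = 400  # hypothetical income goal in USD

for tier, rate in RATE_FLOORS.items():
    print(f"{tier}: {hours_needed(WEEKLY_TARGET, rate)} hours/week")
```

Under these assumptions, the same weekly target takes 80 hours at the low commodity rate but 8 hours at the professional floor, which is why moving up a tier matters more than adding hours.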

Rather than attempting to learn entirely new fields, identify where your existing background provides an advantage.

- Coders recognize technical debt and design quality.

- STEM professionals assess reasoning integrity.

- Legal or financial professionals apply contextual and ethical standards.

Preparation involves refreshing fundamentals and focusing on quality evaluation, not memorizing new facts.

As models advance, the gap widens between “passing automated checks” and “genuinely improving model capabilities.” That gap is where expert contributors create value.

Explore premium AI training jobs at Coral Mountain

Many platforms position AI training as gig work. Coral Mountain operates closer to the infrastructure layer of AGI development, where contributor judgment influences how models reason, balance trade-offs, and generalize knowledge.

Your evaluations shape systems that millions of people may interact with.

Getting started involves five steps:

- Visit the Coral Mountain application page and click “Apply”

- Submit your background and availability

- Complete the Starter Assessment

- Await the decision email

- Log in, choose projects, and begin earning

There are no signup fees. The platform maintains selective standards to preserve quality. The Starter Assessment can only be taken once, so preparation matters.

Apply to Coral Mountain if you understand why quality consistently outweighs volume in advancing frontier AI – and if you have the expertise to contribute.

Coral Mountain Data is a data annotation and data collection company that provides high-quality data annotation services for Artificial Intelligence (AI) and Machine Learning (ML) models, ensuring reliable input datasets. Our annotation solutions include LiDAR point cloud data, enhancing the performance of AI and ML models. Coral Mountain Data provides high-quality data about coral reefs, including sounds of coral reefs, marine life, waves, Vietnamese data…